LLM Evaluation

Benchmark GPT, Claude, Gemini, and custom models on strategic reasoning, spatial awareness, and multi-step planning.

RL Training

Full Gymnasium environment with multi-discrete action space, configurable reward shaping, and headless mode for fast training.

Tournaments

Automated round-robin tournaments with Elo ratings, replay recording, and detailed performance analytics.
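As a rough illustration of how round-robin pairings and Elo ratings fit together, here is a minimal sketch using the standard Elo update rule. It is not taken from the project's code: the K-factor of 32, the starting rating of 1500, the agent names, and the hard-coded match outcome are all assumptions made for the example.

```python
from itertools import combinations


def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (rating_a, rating_b).

    score_a is 1.0 for a win by A, 0.5 for a draw, 0.0 for a loss.
    k=32 is an assumed K-factor, not a value confirmed by the project.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


def round_robin(agents):
    """Every unordered pair of agents plays exactly once."""
    return list(combinations(agents, 2))


# Hypothetical agent names; 1500 is an assumed starting rating.
agents = ["bot_a", "bot_b", "bot_c"]
ratings = {name: 1500.0 for name in agents}

for a, b in round_robin(agents):
    # Placeholder outcome: the first-listed agent always wins.
    ratings[a], ratings[b] = update_elo(ratings[a], ratings[b], score_a=1.0)
```

Because each update adds to one rating exactly what it subtracts from the other, the total rating pool stays constant, which is a handy sanity check when comparing tournament results.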

Diverse Tactical Maps

25 hand-crafted maps across 1v1, 1v1v1, and 2v2 formats with varied terrain, chokepoints, and strategic objectives.

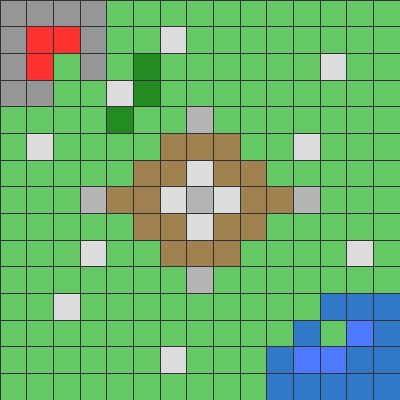

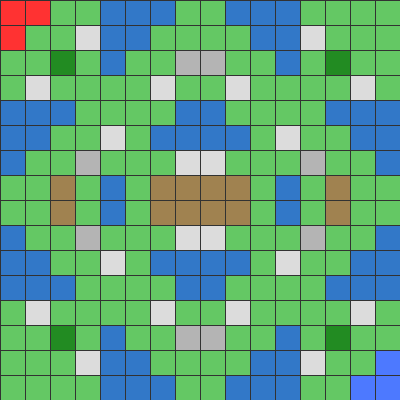

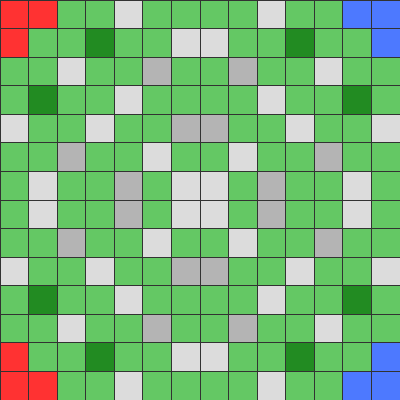

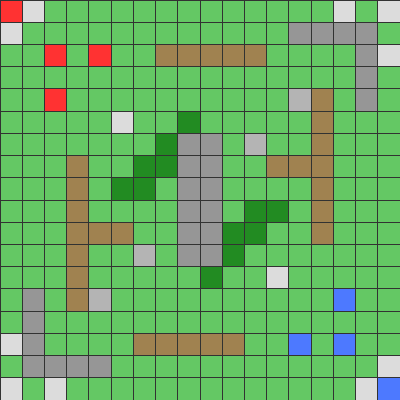

Crossroads

Island Fortress

Tower Rush

Center Mountains

8 Unique Unit Types

Each unit has distinct stats, abilities, and roles, creating a rich decision space for AI agents to master.

How It Works

Install

Install via pip with optional GPU, GUI, and LLM extras. Works on Python 3.10+.

pip install reinforcetactics[llm]

Configure

Pick your agents: LLM bots, RL models, rule-based bots, or your own custom agent.

--agents gpt-4o claude-sonnet

Compete

Run tournaments, compare Elo ratings, analyze replays, and iterate on your models.

python -m reinforcetactics tournament

Built for AI Research

Gymnasium Compatible

Standard RL interface with observation and action spaces, reward shaping, and episode management.
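To show what the Gymnasium-style contract looks like in practice, the sketch below runs one episode against a stand-in environment. `ToyTacticsEnv` is hypothetical and is not the project's environment class: it only mimics the `reset()` / `step()` return shapes that Gymnasium defines, with an assumed multi-discrete action of the form (action type, x, y) and a placeholder reward.

```python
import random


class ToyTacticsEnv:
    """Hypothetical stand-in that follows Gymnasium's reset/step contract."""

    def __init__(self, nvec=(4, 8, 8), max_steps=10):
        self.nvec = nvec            # assumed sizes: action type, x, y
        self.max_steps = max_steps
        self._steps = 0
        self._rng = random.Random()

    def reset(self, seed=None):
        """Start a new episode; returns (observation, info)."""
        self._rng = random.Random(seed)
        self._steps = 0
        return {"turn": 0}, {}

    def sample_action(self):
        """Draw one integer per dimension, as a multi-discrete space would."""
        return tuple(self._rng.randrange(n) for n in self.nvec)

    def step(self, action):
        """Returns (observation, reward, terminated, truncated, info)."""
        assert len(action) == len(self.nvec)
        assert all(0 <= a < n for a, n in zip(action, self.nvec))
        self._steps += 1
        reward = 1.0 if action[0] == 0 else 0.0   # placeholder reward
        terminated = False                        # the toy game never ends on its own
        truncated = self._steps >= self.max_steps
        return {"turn": self._steps}, reward, terminated, truncated, {}


def run_episode(env, seed=0):
    """Play random actions until the episode terminates or is truncated."""
    obs, info = env.reset(seed=seed)
    total_reward, steps, done = 0.0, 0, False
    while not done:
        obs, reward, terminated, truncated, info = env.step(env.sample_action())
        total_reward += reward
        steps += 1
        done = terminated or truncated
    return total_reward, steps
```

A loop written against this contract should carry over to any environment that honors the same interface; only the construction of the environment and the action-sampling call would need to change.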

Multi-Agent Support

PettingZoo integration for multi-agent RL. Train cooperative and competitive policies.

Replay & Analysis

Record battles, export to video, and analyze decision patterns for model interpretability.

Extensible Architecture

Add custom units, maps, reward functions, and AI agents with a clean Python API.

Multiple AI Backends

OpenAI, Anthropic, and Google Gemini SDKs are built in. Plug in any other LLM via its API.

Docker Tournaments

Containerized tournament runner for reproducible benchmarks at scale.

Ready to benchmark your AI?

Open source and ready for research. Clone the repo and run your first tournament in minutes.